Claude recently updated its interactive UI so that an interactive page can be streamed directly into the chat box.

At the same time I came across a project called Generative-UI-MCP. The author's idea is straightforward: use the MCP protocol to clone the whole “Claude can generate interactive UI” trick.

The project itself is not complicated, but it lays out something that is usually hard to explain: what is actually new about Claude's interactive UI, why it is not as simple as “the AI wrote some front-end code for you”, and which problem has to be solved first behind it, rendering or protocol.

I later walked through the whole process again against a real SSE streaming log. Comparing the two sides makes one thing clearer: Claude-style streaming UI is essentially not “the model outputs HTML”, but “the model continuously outputs a UI protocol that the host can reliably consume”.

This article is about exactly that.

It is a continuously interactive UI page

The really new part of Claude's interactive UI / Artifacts is not “the AI generated a page”; people did that long ago. The new part is that what gets generated stays usable: it can keep interacting with the model, mount tools, and update its state.

This is completely different from “the AI wrote a piece of front-end code and you copy it out and run it”.

In the old way, the model is only the starting point, and its role ends once generation is done. In the new way, the model is a continuous participant in the UI session. User actions in the interface flow back to the model, and the new content the model returns can partially update the interface, back and forth.

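The closed loop looks like this: the model generates the UI, the user acts on it, the action flows back to the model, and the model's response updates the UI.

Only when this cycle can run is the UI truly “interactive”. A piece of HTML with a few buttons is not interactive on its own; what makes it interactive is that events can flow back.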

A real streaming output makes this very clear

If you look at a real SSE streaming output, you will find that the model does not spit out a complete page at once, but continuously outputs different types of content blocks in the stream. The front-end receives and assembles them piece by piece, and then hands them to the corresponding tool for rendering.
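For readers who want to follow along with their own logs, here is a minimal Python sketch of the first layer: turning a raw text/event-stream body into named events. The event names used in the test data (content_block_start, content_block_delta, text_delta) follow Anthropic's public Messages streaming format; the parser itself is only an illustration, not how Claude's front-end is actually written.

```python
import json
from typing import Iterator, Tuple

def parse_sse(raw: str) -> Iterator[Tuple[str, dict]]:
    """Yield (event_name, payload) pairs from a raw text/event-stream body."""
    event_name, data_lines = "message", []  # "message" is the SSE default event type
    for line in raw.splitlines():
        if line == "":
            # A blank line dispatches the event accumulated so far.
            if data_lines:
                yield event_name, json.loads("\n".join(data_lines))
            event_name, data_lines = "message", []
        elif line.startswith(":"):
            continue  # comment / keep-alive line
        else:
            field, _, value = line.partition(":")
            if value.startswith(" "):
                value = value[1:]  # the SSE format strips exactly one leading space
            if field == "event":
                event_name = value
            elif field == "data":
                data_lines.append(value)
    if data_lines:  # tolerate a stream that ends without a final blank line
        yield event_name, json.loads("\n".join(data_lines))
```

Each payload also carries an index field, which tells the host which content block a given delta belongs to.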

Broken down, the process takes roughly five steps.

Step 1: Load the UI generation spec first

At the beginning, the model does not generate a widget directly. Instead, it calls a tool along the lines of visualize:read_me, with very short input parameters.

This step is critical. It shows that before the model really starts to “draw the interface”, it first fetches a runtime UI specification. In other words, generation does not start from nothing; the model must first learn which rules to follow this time.

Step 2: The tool returns a whole design system and streaming constraints

Several sections of the returned content are particularly critical: a module description, a role definition, its most critical constraints, and ordering rules written specifically for streaming rendering.

On the surface, this looks like a design specification; but from a runtime perspective, it is more like a generation protocol that “lets the model output stable UI messages”.

It does several things:

Specifies what should appear in the tool and what should appear in natural language

Specifies the order in which code should be streamed out

Specifies which visual effects will destroy the streaming experience, so they are forbidden

Specifies that components must adapt to the host environment, such as CSS variables, dark mode, controlled script capabilities

In other words, this is not “giving the model some aesthetic advice”, but drawing a narrow track for the model.

The real key is not HTML, but the structure of the model output

Then the model calls another tool, such as visualize:show_widget. This part of the stream is the most easily misunderstood, because it looks like a pile of fragments.

Taken alone, such fragments are almost unreadable. But they are not garbage: they are pieces of the tool call's input parameters. The host concatenates the partial_json strings that keep arriving for the same content block and finally restores a complete JSON object.
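The stitching step can be sketched as a small accumulator keyed by content block index. It assumes Anthropic-style events (tool_use content blocks, input_json_delta deltas carrying partial_json); the class and method names are hypothetical.

```python
import json
from typing import Dict, List, Optional, Tuple

class ToolInputAssembler:
    """Rebuild each tool_use input from its input_json_delta fragments."""

    def __init__(self) -> None:
        self._fragments: Dict[int, List[str]] = {}  # block index -> partial_json pieces
        self._names: Dict[int, str] = {}            # block index -> tool name

    def on_event(self, event: dict) -> Optional[Tuple[str, dict]]:
        kind, index = event.get("type"), event.get("index")
        if kind == "content_block_start" and event["content_block"]["type"] == "tool_use":
            self._fragments[index] = []
            self._names[index] = event["content_block"]["name"]
        elif kind == "content_block_delta" and event["delta"]["type"] == "input_json_delta":
            # Individual fragments are not valid JSON; only their concatenation is.
            self._fragments[index].append(event["delta"]["partial_json"])
        elif kind == "content_block_stop" and index in self._fragments:
            raw = "".join(self._fragments.pop(index))
            return self._names.pop(index), (json.loads(raw) if raw else {})
        return None
```

Parsing is deliberately deferred to content_block_stop: trying to json.loads each fragment would fail on almost every event.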

In this case, the reassembled JSON carries a handful of fields.

Every field here is interesting.

title is the identifier of this widget. loading_messages is not decoration; it turns “waiting” into a visible generation process. i_have_seen_read_me works like a state confirmation, indicating that the model generated the widget after reading the spec.

And the real interface is all put into widget_code.

This step reveals the core fact of streaming UI: the model does not directly output the final page, but outputs a UI message that the host can consume.
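To make “a UI message the host can consume” concrete, here is a hedged sketch of how a host might type-check the reassembled input. The four field names come from the stream above. The field types, and the policy of rejecting a widget whose i_have_seen_read_me is not true, are assumptions of this sketch.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class WidgetMessage:
    title: str
    widget_code: str
    loading_messages: List[str] = field(default_factory=list)
    i_have_seen_read_me: bool = False

    @classmethod
    def from_tool_input(cls, data: dict) -> "WidgetMessage":
        title = data.get("title")
        code = data.get("widget_code")
        loading = data.get("loading_messages", [])
        if not isinstance(title, str) or not title:
            raise ValueError("title must be a non-empty string")
        if not isinstance(code, str) or not code.strip():
            raise ValueError("widget_code must be a non-empty HTML/SVG string")
        if not isinstance(loading, list) or not all(isinstance(m, str) for m in loading):
            raise ValueError("loading_messages must be a list of strings")
        # Assumed host policy: refuse widgets generated without confirming the spec.
        if data.get("i_have_seen_read_me") is not True:
            raise ValueError("widget was generated without confirming the spec")
        return cls(title, code, loading, True)
```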

Why the Generative-UI-MCP project looks small but is valuable

I originally thought that cloning Claude's interactive UI would take a lot of machinery: a custom renderer, state management, a complete front-end runtime, a component library, a DSL.

In fact, the core of Generative-UI-MCP turns out to be extremely minimal:

A load_ui_guidelines tool that loads UI generation specs on demand

A system prompt resource that injects the most basic output constraints in advance

There is no large and comprehensive component system, nor complex DSL.

This trade-off actually illustrates the problem very well: to clone Claude's interactive UI, the first thing to solve is not “how to render”, but “how to make the model output a structure that the host can stably consume”. Rendering is a later matter.

So this is first a protocol problem, not a rendering problem

Claude's interactive UI has a strong experiential trait: widgets appear in a stable, predictable way. A widget will not show up as a code block one time and as natural language mixed with HTML the next.

To achieve this, you cannot rely on the model's “self-discipline”; you can only rely on protocol.

What Generative-UI-MCP exposes is exactly this layer:

Widgets must be wrapped with a dedicated fence

The fence must contain structured JSON

The widget_code field contains HTML or SVG

Explanatory text must be written outside the widget block

Multiple widgets must be split into multiple blocks

The output order must be suitable for streaming rendering

These constraints stacked up are essentially already close to a lightweight UI message protocol.

What the host does is essentially route between these messages.
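As an illustration of that routing, the sketch below splits a finished reply into prose segments and widget segments. The fence label widget is a placeholder, because the exact fence Generative-UI-MCP uses is not shown here, and the function name is hypothetical. It enforces three of the rules listed: one JSON object per fence, a widget_code string inside it, and explanations kept outside the fence.

```python
import json
import re
from typing import List, Tuple, Union

TICKS = "`" * 3  # built this way so the pattern never looks like a literal fence line
# Hypothetical fence label; the project's actual fence name may differ.
FENCE = re.compile(re.escape(TICKS + "widget\n") + r"(.*?)" + re.escape("\n" + TICKS), re.DOTALL)

Segment = Tuple[str, Union[str, dict]]

def route_response(text: str) -> List[Segment]:
    """Split a finished reply into ("text", prose) and ("widget", payload) segments."""
    segments: List[Segment] = []
    pos = 0
    for match in FENCE.finditer(text):
        prose = text[pos:match.start()].strip()
        if prose:
            segments.append(("text", prose))  # explanation lives outside the fence
        payload = json.loads(match.group(1))  # the fence must hold structured JSON
        if not isinstance(payload, dict) or not isinstance(payload.get("widget_code"), str):
            raise ValueError("each block must be one JSON object with a widget_code string")
        segments.append(("widget", payload))
        pos = match.end()
    tail = text[pos:].strip()
    if tail:
        segments.append(("text", tail))
    return segments
```

Because each widget gets its own fence, a reply with two widgets simply yields two widget segments, and the host never has to guess where one ends and the next begins.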

Why the spec must be loaded on demand, not stuffed into the prompt all at once

The clone project splits the UI spec into several modules (interactive, chart, mockup, diagram, art) and loads only the ones it needs.

This is not just to save tokens; more importantly, different UI types have different constraints.

Charts have chart rules

Forms have form rules

Mockups have mockup rules

Diagrams have diagram rules

Art is another generation method

If you stuff them all into one big prompt, the model will be polluted by many irrelevant constraints. The value of on-demand loading is that only when a certain type of UI is really going to be generated, the model gets that type of rules, and the output is more stable.
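On-demand loading can be as simple as a lookup table. In this sketch the module names match the clone project's split, but the function signature and the placeholder rule text are made up. The real project serves its specs through an MCP tool, which is not reproduced here.

```python
from typing import Dict, Iterable

# Module names follow the project's split; the rule text is a placeholder.
GUIDELINE_MODULES: Dict[str, str] = {
    name: f"<{name} rules go here>"
    for name in ("interactive", "chart", "mockup", "diagram", "art")
}

def load_ui_guidelines(modules: Iterable[str]) -> str:
    """Return only the rule sections for the requested UI types."""
    requested = list(dict.fromkeys(modules))  # drop duplicates, keep request order
    unknown = [m for m in requested if m not in GUIDELINE_MODULES]
    if unknown:
        raise ValueError(f"unknown guideline modules: {unknown}")
    return "\n\n".join(f"## {m}\n{GUIDELINE_MODULES[m]}" for m in requested)
```

A request for chart rules returns chart rules and nothing else, so mockup or art constraints never enter the context while the model is drawing a chart.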

This is the same idea as the real stream calling visualize:read_me to load the spec before generating anything.

The difficulty of streaming is never “faster”, but “framing while streaming”

Many people's understanding of streaming stops at “display faster”. But both the real output and the clone project show that what the host really has to solve is parsing.

The host cannot just print tokens one by one. It must know:

Whether the current content is plain text or a widget block

Whether the current chunk is the beginning of a block or a fragment from its middle

Whether the JSON is fully closed

When text can be displayed directly

When to enter collection mode

When to hand the complete widget_code to the renderer

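The whole process is roughly this: show plain text as it arrives; on seeing the opening of a widget fence, switch into collection mode; keep buffering until the block closes and its JSON is complete; then hand widget_code to the renderer and return to text mode.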

The way Claude's widgets seem to “emerge naturally” is technically not magic; it is the parser segmenting frames while the stream is still arriving.
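Here is one way to write such a framing parser: a small state machine over incoming text chunks. It assumes a hypothetical widget fence label, and the class name is illustrative. The subtle part is holding back a few trailing characters that could be the start of a fence split across two chunks, so half a fence never leaks into the displayed text.

```python
from typing import List, Tuple

TICKS = "`" * 3
OPEN = TICKS + "widget\n"  # hypothetical opening fence for a widget block
CLOSE = "\n" + TICKS       # matching closing fence

class StreamSplitter:
    """Split streamed text into ("text", prose) and ("widget", json_body) pieces."""

    def __init__(self) -> None:
        self.buffer = ""
        self.in_widget = False  # True while in collection mode

    def feed(self, chunk: str) -> List[Tuple[str, str]]:
        out: List[Tuple[str, str]] = []
        self.buffer += chunk
        while True:
            marker = CLOSE if self.in_widget else OPEN
            idx = self.buffer.find(marker)
            if idx == -1:
                break
            head, self.buffer = self.buffer[:idx], self.buffer[idx + len(marker):]
            if self.in_widget:
                out.append(("widget", head))  # block closed: body is complete JSON text
            elif head:
                out.append(("text", head))
            self.in_widget = not self.in_widget
        if not self.in_widget:
            # Show prose right away, but hold back a tail that might be the
            # beginning of an opening fence split across chunks.
            keep = self._fence_prefix_len()
            safe = self.buffer[:len(self.buffer) - keep]
            if safe:
                out.append(("text", safe))
            self.buffer = self.buffer[len(safe):]
        return out

    def _fence_prefix_len(self) -> int:
        """Length of the longest buffer suffix that is also a prefix of OPEN."""
        for n in range(min(len(OPEN) - 1, len(self.buffer)), 0, -1):
            if self.buffer.endswith(OPEN[:n]):
                return n
        return 0

    def finish(self) -> List[Tuple[str, str]]:
        """Flush at end of stream; an unclosed widget block is an error."""
        if self.in_widget:
            raise ValueError("stream ended inside an unclosed widget block")
        rest, self.buffer = self.buffer, ""
        return [("text", rest)] if rest else []
```

Text mode streams straight through, while widget mode only emits once the closing fence arrives. That asymmetry is what makes plain text appear instantly and widgets appear whole.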

Why even the order of <defs>, style, shadows and other details must be managed

When you first see such specs, it is easy to feel that they are too detailed:

In SVG, <defs> must precede graphics

In HTML, style first, script last

Try to avoid gradients, shadows, and blur

But these rules are not about aesthetics, but about whether every frame the user sees is valid.

The reason is simple:

If <defs> has not arrived yet and graphics come out first, markers and clipPath render wrong at first and only correct themselves later

If style arrives too late, the user first sees the unstyled UI and then watches the styles suddenly fill in

Gradients, blur, and shadows are more prone to cross-frame inconsistency as the stream patches the output
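These ordering rules are mechanical enough to lint. The sketch below is a heuristic string check, not a real HTML or SVG parser. The function name, the list of shape tags, and the list of discouraged effects are this sketch's own reading of the rules above.

```python
import re
from typing import List

# SVG shapes that may reference <defs> content such as markers or clipPaths.
GRAPHIC_TAG = re.compile(r"<(?:path|line|polyline|polygon|rect|circle|ellipse|use|text)\b")
# Effects the rules discourage because they tend to flicker between streamed frames.
UNSTABLE_EFFECTS = ("linear-gradient", "radial-gradient", "<lineargradient",
                    "<radialgradient", "box-shadow", "text-shadow", "blur(")

def check_stream_order(widget_code: str) -> List[str]:
    problems = []
    style, script = widget_code.find("<style"), widget_code.find("<script")
    if style != -1 and script != -1 and script < style:
        problems.append("<script> appears before <style>")
    end = widget_code.rfind("</script>")
    if end != -1 and widget_code[end + len("</script>"):].strip():
        problems.append("content follows the last <script>")
    defs = widget_code.find("<defs")
    graphic = GRAPHIC_TAG.search(widget_code)
    if defs != -1 and graphic is not None and graphic.start() < defs:
        problems.append("graphics appear before <defs>")
    lowered = widget_code.lower()
    problems.extend(f"avoid {effect}" for effect in UNSTABLE_EFFECTS if effect in lowered)
    return problems
```

The `\b` in the shape pattern matters: it keeps `<linearGradient` from being mistaken for a `<line` element.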

Claude's widget output rarely has obvious jitter, not only because the model is stronger, but also because this set of constraints suppresses the instability of intermediate states.

What a real widget shows about the kind of front-end this approach favors

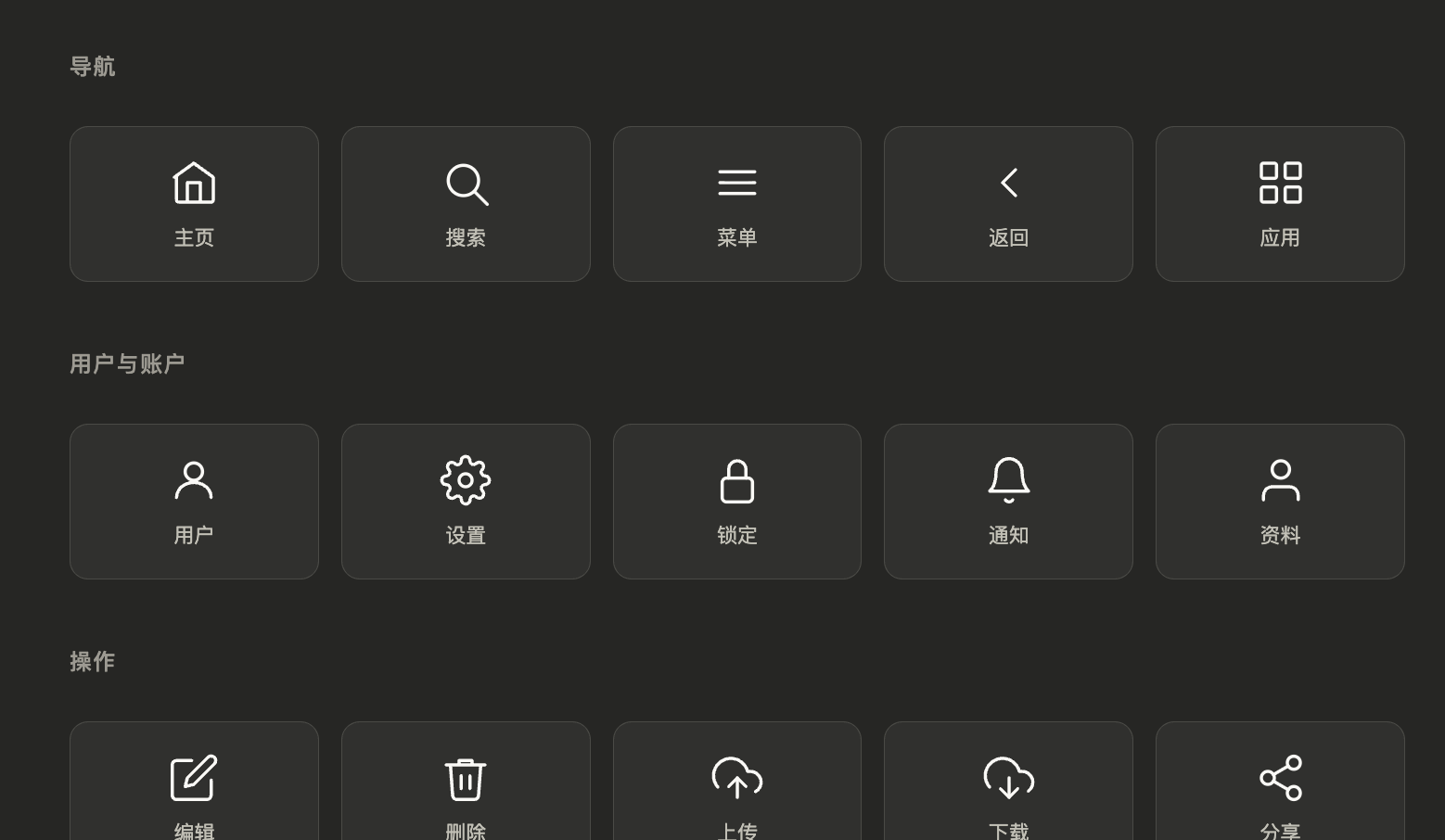

In that real output, the model finally generated an interactive panel of “25 common UI line icons”. It displays icons by category, clicking can highlight, and gives feedback at the bottom.

From the generated widget_code, several very clear trade-offs can be seen.

First, the layout is very simple: at its core is a stable grid rather than complex responsive tricks.

Second, the styling is extremely light. It relies entirely on CSS variables provided by the host, with no hard-coded colors, so it adapts to dark mode naturally.

Third, the icons are inline SVG with no image assets, which makes them easy to stream and easy to recolor in hover and active states.

Fourth, the JS is very short and handles only local interaction: no complex state management, no network requests, no framework.

This shows that such streaming UI is more like an “instant interactive shell in conversation”, not a complete front-end application. Complex logic is left to the model, local interaction stays on the front-end.
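The same traits can be turned into a rough check a host might run before rendering. The function name, the regular expression for hex colors, and the list of network APIs are all illustrative assumptions. In practice, network access is better blocked by the sandbox itself than by string matching.

```python
import re
from typing import List

HEX_COLOR = re.compile(r"#(?:[0-9a-fA-F]{3}){1,2}\b")  # e.g. #fff or #ff0000
NETWORK_APIS = ("fetch(", "XMLHttpRequest", "WebSocket(", "EventSource(")

def check_host_fit(widget_code: str) -> List[str]:
    """Flag traits that work against a light, host-themed, local-only widget."""
    problems = []
    for color in HEX_COLOR.findall(widget_code):
        problems.append(f"hard-coded color {color}: use a host CSS variable instead")
    for api in NETWORK_APIS:
        if api in widget_code:
            problems.append(f"network call {api}: keep interaction local")
    return problems
```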

It is very suitable for:

Icon panels

Comparison cards

Lightweight filters

Small charts

Interactive explainers

Embedded mockups

But not very suitable for:

Super complex business forms

Large multi-page applications

Heavy state back-office systems

Strong real-time collaborative editors

Its strength is instant generation, instant embedding, and instant interaction, not serving as the shell of a long-running large application.

In the real stream, there is another important signal at the end: text and UI must divide labor

After the tool finishes rendering the widget, the system returns another prompt to the model.

This prompt is very valuable. It tells the model plainly: what has already been rendered should not be repeated.

The natural language that the model then adds is also very restrained, only doing three things:

Summarize what this widget is

Tell the user how to operate

Prompt the user what else they can ask the model to do next

This prompt and the earlier read_me rule about what belongs in the tool and what belongs in natural language form a closed loop.

In other words, Claude-style streaming UI not only “can render widgets”, but also manages the responsibility boundary between text and visuals.
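The post-render prompt can be pictured as a tool_result block that the host sends back. The block structure (type, tool_use_id, content) follows Anthropic's Messages API. The function name and the wording of the note are invented for illustration, paraphrasing the division of labor described above; this is not the prompt that appeared in the stream.

```python
def widget_rendered_result(tool_use_id: str, title: str) -> dict:
    """Build a tool_result telling the model the widget is on screen (wording is hypothetical)."""
    note = (
        f'Widget "{title}" has been rendered and is visible to the user. '
        "Do not repeat its content in text; briefly say what it is, "
        "how to use it, and what the user could ask for next."
    )
    return {
        "type": "tool_result",
        "tool_use_id": tool_use_id,
        "content": [{"type": "text", "text": note}],
    }
```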

The parts that Generative-UI-MCP can't see are actually the hardest to productize

Honestly, reading this clone project makes it clearer which parts of the original system protocol alone cannot fill in.

1. Sandbox

The HTML/JS generated by the model cannot run naked. There must be iframe isolation, CDN whitelist, script capability limits, resource permission boundaries.

Otherwise, a single malicious script generated by the model is enough to cause problems for the host.
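Here is a minimal sketch of that isolation, assuming the widget is rendered into an iframe via srcdoc. Using sandbox="allow-scripts" without allow-same-origin gives the frame an opaque origin. A Content-Security-Policy meta tag with default-src 'none' blocks network requests while still letting whitelisted CDNs serve scripts. The function name and the exact policy are assumptions, not Claude's actual configuration.

```python
import html
from typing import Iterable

def sandboxed_iframe(widget_code: str, script_cdns: Iterable[str] = ()) -> str:
    """Wrap model-generated widget code in an isolated iframe (illustrative policy)."""
    sources = " ".join(["'unsafe-inline'", *script_cdns])  # inline widget JS + CDN whitelist
    csp = (
        "default-src 'none'; "          # no fetch/XHR/WebSocket, no other resources
        f"script-src {sources}; "
        "style-src 'unsafe-inline'; "
        "img-src data:"
    )
    document = (
        "<!doctype html><html><head>"
        f'<meta http-equiv="Content-Security-Policy" content="{csp}">'
        f"</head><body>{widget_code}</body></html>"
    )
    # allow-scripts without allow-same-origin gives the frame an opaque origin,
    # so its scripts cannot reach the host page's DOM, cookies, or storage.
    return f'<iframe sandbox="allow-scripts" srcdoc="{html.escape(document, quote=True)}"></iframe>'
```

Escaping the whole document into the srcdoc attribute matters: without it, a stray quote in the widget code could break out of the attribute.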

2. Action protocol

What happens after the user clicks cannot be left to whatever onclick logic the model chooses to write. A more mature design has the host first define a unified action schema, such as:

filter_changed

submit_form

request_refresh

select_item

The widget only sends actions, the host decides whether to handle locally, call tools, or ask the model again.
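A hedged sketch of the host side follows: validate the action type against a fixed schema and decide which layer handles it. Inside the iframe, a widget would typically send such actions with window.parent.postMessage; that part is omitted. The four action types come from the list above, while the routing table and the dispatch function are only an example policy.

```python
from typing import Any, Dict

# Action types from the schema above. Which layer handles each one is an
# illustrative policy, not a documented one.
ROUTES: Dict[str, str] = {
    "select_item": "local",      # highlight in place, no round trip
    "filter_changed": "local",   # re-filter data the widget already holds
    "request_refresh": "tool",   # host re-runs a tool for fresh data
    "submit_form": "model",      # needs reasoning, so ask the model again
}

def dispatch(action: Dict[str, Any]) -> str:
    """Validate an action sent by a widget and decide who handles it."""
    kind = action.get("type")
    if kind not in ROUTES:
        raise ValueError(f"unknown action type: {kind!r}")
    if not isinstance(action.get("payload", {}), dict):
        raise ValueError("payload must be a JSON object")
    return ROUTES[kind]
```

Anything outside the schema is rejected, so a widget cannot invent a new kind of request just because the model wrote one into its script.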

3. Incremental patch

When Claude updates a widget over a multi-turn conversation, it often updates part of it rather than regenerating the whole block. This requires the host to maintain state, and it requires the model to know when to return a patch and when to return a full replacement.

The biggest gap between demo and product-level experience is probably here.
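One way to picture patch versus full replacement is a host that stores each widget as named regions. The message shape here (widget_id, op, regions) and the class name are entirely hypothetical. They only illustrate the contract the paragraph above describes: the model must send a replace whenever a patch would touch structure the host does not know about.

```python
from typing import Dict

class WidgetState:
    """Host-side record of rendered widgets, keyed by widget id and region id."""

    def __init__(self) -> None:
        self.widgets: Dict[str, Dict[str, str]] = {}

    def apply(self, message: dict) -> None:
        widget_id, op, regions = message["widget_id"], message["op"], message["regions"]
        if op == "replace":
            # Full replacement: the model resends every region of the widget.
            self.widgets[widget_id] = dict(regions)
        elif op == "patch":
            current = self.widgets.get(widget_id)
            if current is None:
                raise KeyError(f"cannot patch unknown widget {widget_id!r}")
            unknown = set(regions) - set(current)
            if unknown:
                # A structural change should arrive as a replace, not a patch.
                raise KeyError(f"patch touches unknown regions {sorted(unknown)}")
            current.update(regions)
        else:
            raise ValueError(f"unsupported op {op!r}")
```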

The one sentence really worth remembering

The biggest takeaway from Generative-UI-MCP is not learning some new trick, but realizing more clearly:

Building interactive UI is never about solving rendering first, but about solving protocol first.

Once the protocol is stable, the rest follows:

Streaming parser

Widget rendering

Sandbox execution

Event reflux

Tool mounting

Incremental update

Claude has basically run the whole chain end to end, which is why it doesn't feel like a demo. Generative-UI-MCP open-sources the first link of this chain, and that makes the whole thing understandable, discussable, and possible to take apart for the first time.

Looking back at those seemingly fragmented streaming snippets, especially the recurring input_json_delta, widget_code, and tool_use, you can see they are not noise. They are exactly the traces this generative UI protocol leaves at runtime.

Appendix: The original prompts that actually appeared in this stream

The following is not a cleaned-up template, but the original prompts and spec text restored from the real streaming output.